RAID 5, RAID 6, and RAID 10 – When Scaling Storage Meets Physics

What happens when your data footprint hits 100TB? You can’t just mirror everything; your CFO would kill you. But striping it means playing Russian roulette with your disks.

Redundancy is straightforward at small scale. It becomes statistical at large scale.

In Part 1, we explored RAID’s simplest forms: striping for speed and mirroring for reliability. Those two models solved early storage problems elegantly, but they shared a fundamental limitation. They scale poorly when storage grows beyond modest sizes.

Mirroring doubles hardware requirements. Striping multiplies failure risk.

Both become economically or operationally unsustainable when data grows into tens or hundreds of terabytes. That scaling problem forced storage engineers to introduce mathematics into redundancy.

Parity-based RAID was born from that necessity. Instead of duplicating data, parity RAID calculates recovery information on the fly, allowing the array to reconstruct missing data when a drive fails. On paper, it was the ultimate compromise between cost and reliability. In reality, it introduced a hidden layer of complexity that modern storage infrastructure is still struggling to survive.

The Economics That Forced RAID to Evolve

As data exploded in the early 2000s, IT departments hit a physical and financial wall. Mirroring (RAID 1) is great in theory, but when you need 100TB of usable space, realizing you have to buy, power, and cool 200TB of physical drives is a tough conversation to have with management.

Losing 50% of your raw capacity to redundancy is fine for a 500GB boot drive. It is an absolute budget-killer for a massive database or archival platform.

Parity-based RAID levels, particularly RAID 5 and RAID 6, solved this. Instead of keeping a lazy, 1-to-1 clone of every file, parity algorithms use math to spread “recovery instructions” across all the disks in the array.

The storage efficiency gains were massive. If you build a three-disk RAID 5 array, you only sacrifice one disk’s worth of space to protect the whole setup. Build a five-disk array? You’re still only losing one disk to parity. Suddenly, engineers were getting 80% usable capacity out of their hardware instead of 50%.
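To make the arithmetic concrete, here is a minimal sketch of the usable-capacity math. The function name `usable_fraction` and the level labels are invented for illustration, and the sketch assumes every disk in the array is the same size.

```python
def usable_fraction(level: str, disks: int) -> float:
    """Fraction of raw capacity left for data, assuming equal-size disks."""
    if level == "raid5":
        if disks < 3:
            raise ValueError("RAID 5 needs at least 3 disks")
        return (disks - 1) / disks  # one disk's worth of parity
    if level == "raid6":
        if disks < 4:
            raise ValueError("RAID 6 needs at least 4 disks")
        return (disks - 2) / disks  # two disks' worth of parity
    if level == "raid10":
        if disks < 4 or disks % 2:
            raise ValueError("RAID 10 needs an even number of disks, 4 or more")
        return 0.5  # every block is stored twice
    raise ValueError(f"unknown level: {level}")


for level, disks in [("raid5", 3), ("raid5", 5), ("raid6", 6), ("raid10", 6)]:
    print(f"{level} with {disks} disks: {usable_fraction(level, disks):.0%} usable")
```

The five-disk RAID 5 case is where the 80% figure comes from; a three-disk array lands closer to 67%.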

Parity RAID completely transformed storage economics. It also introduced hidden risks that only became visible as disks grew larger and workloads became more demanding.

RAID 5 – Elegant Mathematics With Fragile Edges

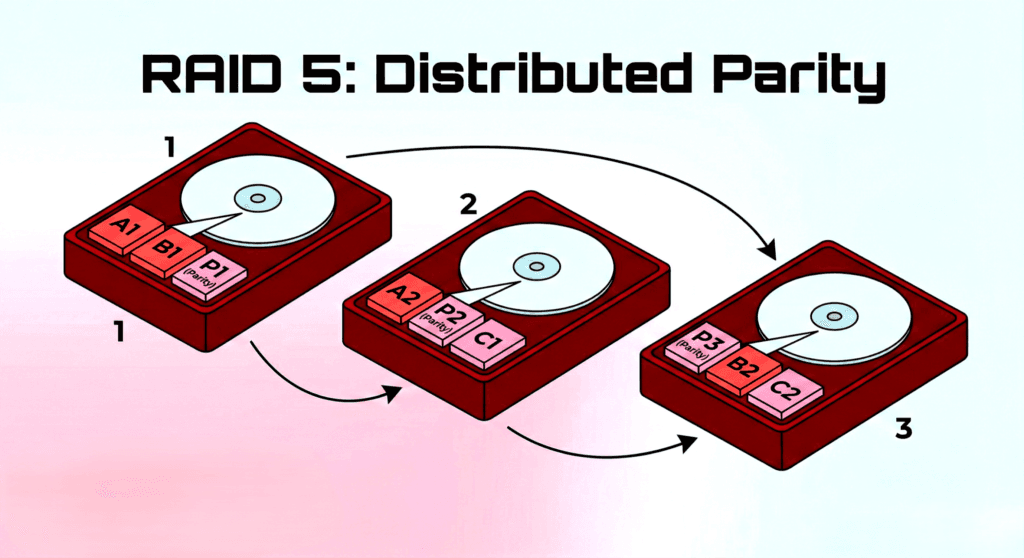

Instead of hoarding full copies of files, RAID 5 chops your data into blocks and spreads them across every disk in the array, along with a special block called “parity.”

Think of parity like simple algebra. If Drive A holds a 3 and Drive B holds a 2, the controller calculates the parity and stores a 5 on Drive C. If Drive A suddenly catches fire and fails, the system doesn’t panic. It looks at Drive B (2) and the Parity block (5), and instantly knows Drive A must have held a 3. It reconstructs the missing data on the fly.
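The addition analogy maps closely onto what RAID 5 actually does, except that the parity operation is a bitwise XOR rather than a sum. The sketch below is a toy illustration, not controller code: the helper `xor_blocks` and the sample byte strings are made up for the example.

```python
from functools import reduce


def xor_blocks(*blocks: bytes) -> bytes:
    """XOR equal-length blocks together, byte by byte."""
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("blocks must all be the same length")
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


drive_a = b"DATA"
drive_b = b"MORE"
parity = xor_blocks(drive_a, drive_b)    # stored on the third disk

# Drive A fails: XOR the survivors to recover what it held.
rebuilt_a = xor_blocks(drive_b, parity)
print(rebuilt_a == drive_a)
```

XOR is its own inverse, so the same function both computes parity and rebuilds a missing block, regardless of which single disk is lost.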

On paper, RAID 5 appeared to solve the scaling problem. It offered redundancy without the cost of full duplication and performance without excessive waste.

Why RAID 5 Became the Undisputed Industry Standard

For over a decade, if you walked into a server room, almost every chassis you looked at was running RAID 5. It dominated the enterprise because it delivered a trifecta of wins:

- Massive Storage Efficiency: You only pay the “RAID tax” of a single drive, whether your array has three disks or eight.

- Blazing Read Speeds: Because the data is striped across multiple spindles, the system can pull file fragments from several disks simultaneously.

- The Safety Net: You can survive a catastrophic single-drive failure without taking the system offline or losing a single byte of production data.

If you were an IT director trying to stretch a hardware budget while keeping the infrastructure safe, RAID 5 wasn’t just a good option, it was the only option.

The Hidden Write Penalty

With mirroring (RAID 1), writing a file is simple: the controller just writes it to two disks simultaneously and moves on. Parity RAID isn’t that easy.

Because of that math we just talked about, every small update to existing data costs four I/O operations instead of one: read the old data, read the old parity, write the new data, and write the new parity (with the controller recalculating parity in between).

In the infrastructure world, this is known as the infamous RAID Write Penalty.
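The four steps can be sketched as a toy read-modify-write on a single stripe. The `Stripe` class and its `io_count` counter are hypothetical, each block is reduced to a single integer, and real controllers add caching and full-stripe write optimizations that this ignores.

```python
class Stripe:
    """One RAID 5 stripe: several data blocks plus one XOR parity block."""

    def __init__(self, data_blocks: list[int]):
        self.data = list(data_blocks)
        self.parity = 0
        for block in self.data:
            self.parity ^= block
        self.io_count = 0  # disk operations spent on small writes

    def small_write(self, index: int, new_value: int) -> None:
        old_data = self.data[index]      # I/O 1: read old data
        self.io_count += 1
        old_parity = self.parity         # I/O 2: read old parity
        self.io_count += 1
        # Remove the old data's contribution, then add the new data's.
        new_parity = old_parity ^ old_data ^ new_value
        self.data[index] = new_value     # I/O 3: write new data
        self.io_count += 1
        self.parity = new_parity         # I/O 4: write new parity
        self.io_count += 1


stripe = Stripe([3, 2, 7])
stripe.small_write(0, 9)
print(stripe.io_count)
```

Note that the update never touches the other data blocks; the old parity already encodes them, which is why the cost stays at four I/Os no matter how wide the stripe is.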

If you are just dumping massive, sequential video files onto a backup server, the controller handles this gracefully and you might not even notice the lag. But if you throw a heavy, random-write workload at it like a highly active SQL database, that parity math will absolutely crush your IOPS (Input/Output Operations Per Second). Your read speeds will be fantastic, but your write speeds will hit a brick wall compared to a simple mirrored array.

Back in the days of “spinning rust” (traditional hard drives), the disks themselves were so mechanically slow that the controller’s math overhead was an acceptable trade-off. Today? Throw a modern, high-transaction container or database workload at a RAID 5 array, and that write penalty goes from a minor annoyance to a massive system bottleneck.

The Rebuild Time Crisis

The most serious weakness of RAID 5 did not become visible until disk capacities increased dramatically. Rebuilding a failed disk requires reading data from every surviving disk and recalculating parity across the entire array.

When enterprise drives were measured in hundreds of gigabytes, rebuilds often completed within hours. As capacities expanded into multi-terabyte and now tens-of-terabytes per disk, rebuild times stretched into days or longer.
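A back-of-the-envelope estimate shows why. The sketch below assumes the rebuild is limited by a sustained rate of 100 MB/s, an illustrative figure rather than a measurement; real rebuilds on arrays that are still serving production I/O are often throttled well below a drive's peak throughput.

```python
def rebuild_hours(capacity_tb: float, rebuild_mb_per_s: float) -> float:
    """Best-case hours to rewrite one whole disk at a sustained rate."""
    capacity_mb = capacity_tb * 1_000_000  # decimal units: 1 TB = 10^6 MB
    return capacity_mb / rebuild_mb_per_s / 3600


# Assumed sustained rebuild rate of 100 MB/s for both drive sizes.
for capacity_tb in (0.3, 4, 20):
    hours = rebuild_hours(capacity_tb, 100)
    print(f"{capacity_tb} TB drive: about {hours:.1f} hours")
```

At the same assumed rate, rebuild time grows linearly with capacity, so a 20TB drive takes roughly 67 times longer than a 300GB one.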

During a rebuild, the array operates in degraded mode. All surviving disks must serve production I/O while simultaneously reconstructing the missing drive. This sustained load increases stress across the entire array and extends the vulnerability window.

If another disk fails during that window, RAID 5 cannot recover. The array is lost.

This is not a theoretical concern. It is a statistically predictable outcome as disk sizes grow and rebuild times lengthen.

Unrecoverable Read Errors and Probability Risk

Modern storage reliability introduces another constraint: Unrecoverable Read Errors (UREs).

Mechanical drives are not perfectly readable. Manufacturer specifications typically quote an unrecoverable read error rate of no more than one error per 10¹⁴ bits read for consumer-class SATA drives; enterprise-class drives are usually rated at one per 10¹⁵. In practical terms, 10¹⁴ bits works out to roughly one unrecoverable read error for every 12.5 terabytes read.

Under normal operation, parity masks these events. The controller reconstructs the unreadable sector using redundancy, and the system continues without incident.

Rebuild conditions are different.

A RAID 5 rebuild requires reading every byte from every surviving disk. As drive capacities grow into the tens of terabytes, the total volume of data read during a rebuild can easily exceed the statistical threshold for encountering a URE.

If a degraded RAID 5 array encounters an unrecoverable read error during rebuild, there is no remaining redundancy to compensate. The reconstruction process fails, and data loss becomes likely.

This is not a rare edge case. It is a probability exposure that increases as disk sizes and array capacities expand.
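The exposure can be estimated with a simple model. The sketch below treats the quoted URE rate as an independent per-bit error probability, which is a deliberately pessimistic assumption: the specification is an upper bound, and real drives often do better. The function name and the example array sizes are invented for illustration.

```python
import math


def p_ure_during_rebuild(surviving_disks: int, disk_tb: float,
                         ure_per_bit: float = 1e-14) -> float:
    """Probability of at least one URE while reading every surviving disk."""
    bits_read = surviving_disks * disk_tb * 1e12 * 8
    # 1 - (1 - p)^n, written with log1p/expm1 to stay accurate for tiny p.
    return -math.expm1(bits_read * math.log1p(-ure_per_bit))


# Four-disk RAID 5 of 4 TB drives: three survivors must be read in full.
print(f"{p_ure_during_rebuild(3, 4):.0%}")
# Same array with drives rated at one error per 10^15 bits.
print(f"{p_ure_during_rebuild(3, 4, ure_per_bit=1e-15):.0%}")
```

Even under this crude model, the shape of the problem is clear: the probability depends only on how many bits the rebuild has to read, so larger drives and wider arrays both push it up.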

Parity RAID extended the economics of storage. It also amplified the statistical risk inherent in large-scale disk recovery.

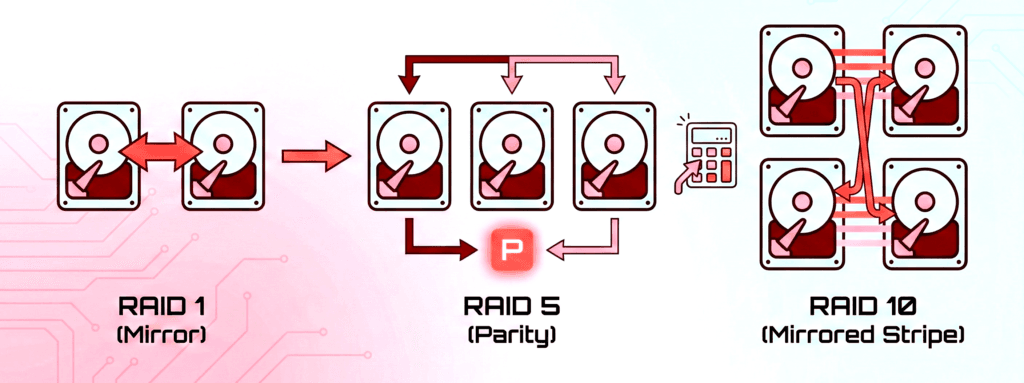

RAID 6 – Parity Attempts to Fix Parity

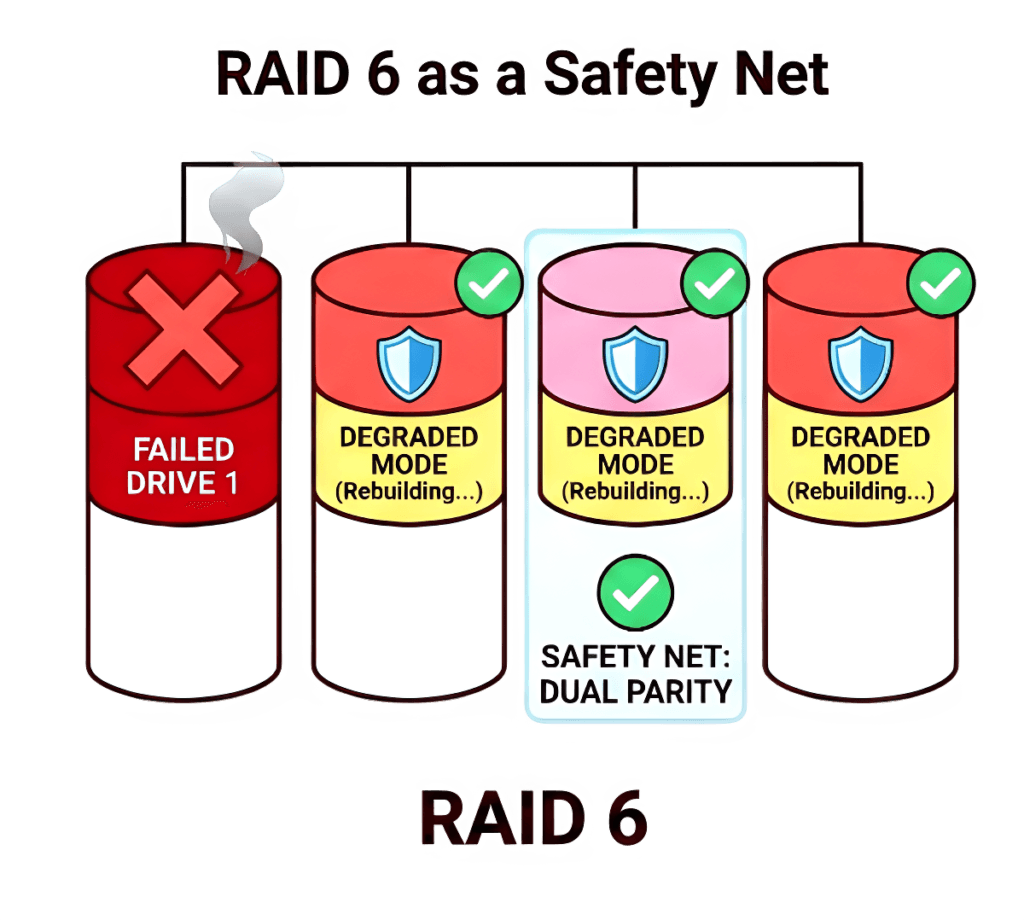

RAID 6 takes the exact same concept as RAID 5 but adds a second, independent layer of parity. That extra math buys you one massive advantage: the array can survive two simultaneous disk failures without dropping data.

Why RAID 6 Exists

It exists specifically to solve the rebuild time crisis. When you are reconstructing a massive drive over several days, the risk of a second drive failing or hitting a URE is uncomfortably high. Dual parity acts as your insurance policy. If a second disk dies during a rebuild, the array stays online.

From a data protection standpoint, RAID 6 is significantly safer than RAID 5 for high-capacity arrays. But from a performance standpoint, that safety comes with a bill. Calculating two sets of parity for every single write operation makes the RAID write penalty even heavier.

RAID 6 often incurs a six-I/O write penalty for small writes.

The Performance Trade-Off of Dual Parity

Each additional parity calculation increases write complexity. RAID 6 arrays often exhibit noticeably slower write performance than RAID 5 arrays.

If you are building a massive backup archive or a read-heavy file server, that trade-off is perfectly fine. But if you throw a high-transaction database at it, RAID 6 is going to crawl.
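A common rule of thumb turns those write penalties into an effective IOPS estimate. The sketch below uses a penalty of 2 for a mirrored array (each write lands on two disks), 4 for RAID 5, and 6 for RAID 6; the 150 IOPS per disk and the 70% write mix are assumptions chosen for illustration, and the formula ignores controller caches and full-stripe writes.

```python
# Back-end I/Os generated by one small front-end write.
WRITE_PENALTY = {"mirror": 2, "raid5": 4, "raid6": 6}


def effective_iops(level: str, disks: int, iops_per_disk: float,
                   write_fraction: float) -> float:
    """Front-end IOPS the array can sustain for a given read/write mix."""
    raw_iops = disks * iops_per_disk
    read_fraction = 1 - write_fraction
    cost_per_request = read_fraction + write_fraction * WRITE_PENALTY[level]
    return raw_iops / cost_per_request


# Eight disks, an assumed 150 IOPS each, 70% writes.
for level in WRITE_PENALTY:
    print(f"{level}: about {effective_iops(level, 8, 150, 0.7):.0f} IOPS")
```

With a read-only mix (`write_fraction=0`) all three layouts come out identical, which matches the point that the trade-off only hurts write-heavy workloads.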

RAID 6 demonstrates a recurring theme in storage engineering. Improving reliability almost always introduces performance and complexity costs.

RAID 10 – When Engineers Stop Trusting Parity

While parity RAID dominated enterprise storage for years, engineers running high-performance workloads eventually got tired of the write penalties and agonizing rebuild times. The solution? Stop doing math and go back to brute force.

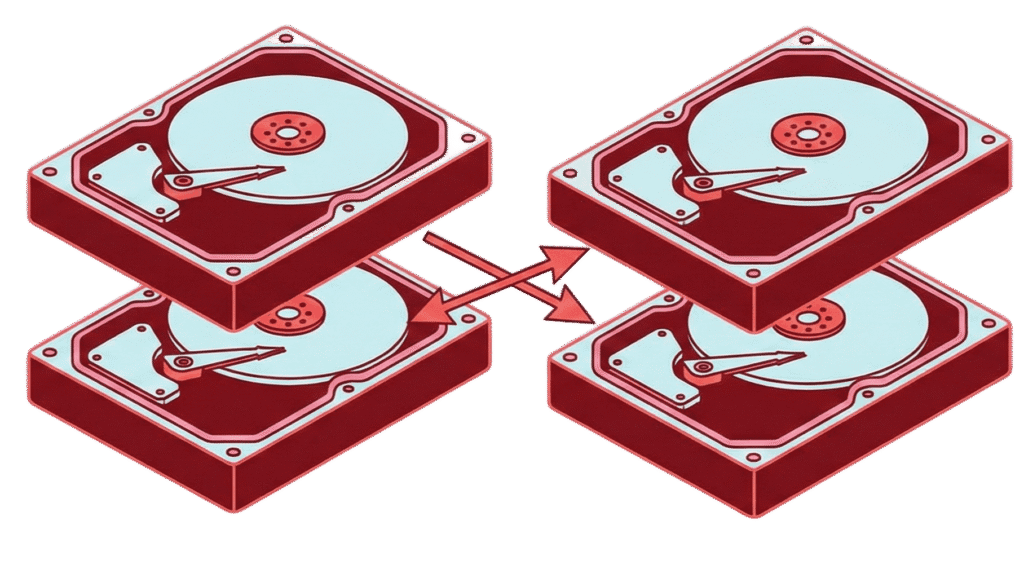

RAID 10 (literally RAID 1 + RAID 0) simply takes mirrored pairs of disks and stripes your data across them.

Yes, you instantly lose 50% of your raw storage capacity. You are back to buying twice the hardware you need. But for enterprise architects, sacrificing that space buys massive operational peace of mind.

Why RAID 10 Became the Operational Favorite

Think about a disk failure in RAID 10. There is no complex parity calculation tying up the controller. When a drive dies, the system just copies the data from the surviving mirrored drive over to the new one. It is a fast, simple, 1-to-1 data copy.

Because it completely skips the parity math overhead, RAID 10 handles heavy, random read/write workloads effortlessly. If you are running highly active databases, virtualization platforms, or any performance-critical application, RAID 10 is the default choice. The recovery is straightforward, and the performance is entirely predictable.

RAID 10 and Failure Domains

RAID 10 also changes how an array actually breaks.

In a parity array, every single disk is tied into the same math equation across the entire array. RAID 10 breaks your storage into much smaller “failure domains.” You can actually lose multiple disks in a RAID 10 array without dropping any data as long as you don’t lose both disks in the exact same mirrored pair.

Because the failure risk is localized to just those small pairs, your recovery is faster, and a cascading failure is far less likely to wipe out your entire system.
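The failure-domain argument can be checked by brute force. The sketch below enumerates every possible combination of failed disks and counts how many leave at least one healthy member in each mirrored pair. It assumes every disk is equally likely to fail, and the pairing scheme (disks 2k and 2k+1 form pair k) is just a convention for the example.

```python
from itertools import combinations


def surviving_fraction(pairs: int, failures: int) -> float:
    """Share of failure combinations a RAID 10 array survives."""
    disks = range(pairs * 2)  # disks 2k and 2k+1 form mirrored pair k
    total = survived = 0
    for failed in combinations(disks, failures):
        total += 1
        pairs_hit = {disk // 2 for disk in failed}
        # Survives only if no pair lost both of its members.
        if len(pairs_hit) == failures:
            survived += 1
    return survived / total


# Eight-disk RAID 10 (four mirrored pairs).
for failures in (1, 2, 3):
    print(f"{failures} failed disks: {surviving_fraction(4, failures):.0%} survivable")
```

For comparison, a RAID 5 array survives none of the two-disk combinations, and RAID 6 survives none of the three-disk ones.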

The Physics Problem No RAID Level Can Ignore

All RAID configurations face a fundamental limitation.

Hard drive capacity has exploded, but mechanical reliability hasn’t improved at the same pace. A 20TB drive has roughly the same physical failure rate as a 2TB drive did a decade ago.

Because there is simply so much more data to churn through, your “vulnerability window” during a rebuild stretches from a few hours to several days. The longer that window stays open, the higher the statistical probability that a second drive will die while the array is trying to heal.
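How the window length feeds into risk can be sketched with a simple exponential failure model. Everything here is an assumption for illustration: the 2% annualized failure rate, the independence of failures, and the function name. Real arrays tend to fare worse than this model suggests, because drives from the same batch fail in correlated ways, rebuild load adds stress, and unrecoverable read errors are not counted at all.

```python
import math


def p_second_failure(surviving_disks: int, annual_failure_rate: float,
                     window_hours: float) -> float:
    """Chance that at least one surviving disk fails during the rebuild window."""
    hours_per_year = 8760
    expected_failures = (surviving_disks * annual_failure_rate
                         * window_hours / hours_per_year)
    return 1 - math.exp(-expected_failures)


# Seven surviving disks, an assumed 2% annual failure rate.
for window_hours in (6, 72, 168):
    risk = p_second_failure(7, 0.02, window_hours)
    print(f"{window_hours} h window: {risk:.3%}")
```

The absolute numbers depend heavily on the assumed failure rate, but the scaling does not: for small probabilities, a window that is twelve times longer carries roughly twelve times the risk.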

This is not a flaw in RAID design. It is a consequence of physical scaling limits.

When RAID 5 and RAID 6 Still Make Sense

So, is parity RAID dead? Not at all. You just have to be incredibly intentional about where you deploy it.

RAID 5 and RAID 6 still absolutely dominate archival storage, massive backup targets, and read-heavy environments like media streaming servers. If you have a workload where data is written once and read thousands of times, and you need to squeeze every single terabyte out of your hardware budget, parity RAID is exactly what you want.

RAID 6, in particular, is still the go-to for massive data silos where surviving a double-disk failure is non-negotiable. You just can’t treat parity like a “deploy and forget” solution anymore; you have to do the math on your rebuild times before you put it into production.

Why RAID 10 is the Modern Default for Performance

Storage used to be the most expensive part of the server rack. Today, downtime and latency often cost far more than a few extra hard drives.

Because of that shift, enterprise architects increasingly just bite the bullet and pay the 50% capacity tax for RAID 10. For modern, heavy workloads like dense virtualization platforms or Kubernetes container nodes, where consistent I/O is critical, the predictable performance and low-stress rebuilds are easily worth the hardware cost.

It highlights a massive shift in infrastructure philosophy: engineers are finally willing to trade raw storage efficiency for a good night’s sleep.

The Limits of Hardware RAID

Even RAID 10 does not address the broader limitations of traditional hardware RAID.

RAID operates strictly at the block level. It has no awareness of files, application structures, or data importance. A critical database index and a temporary log file are treated identically.

More importantly, traditional hardware RAID cannot detect silent data corruption, often referred to as bit rot. If a drive returns corrupted data without signaling a complete failure, the RAID controller assumes the block is valid. Parity calculations or mirrored copies will faithfully reproduce that corruption across the array.

RAID is highly effective at surviving complete disk failure. It is not designed to verify the integrity of the data itself.

As infrastructure scales, redundancy alone is insufficient. Systems must not only reconstruct lost blocks, but also validate the correctness of the data they store.
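The gap between redundancy and validation can be shown with a toy example. In the sketch below, a mirrored block silently diverges by one bit. Comparing the two copies reveals that something is wrong but not which copy to trust; a checksum recorded at write time settles it. The use of SHA-256 and the variable names are illustrative choices, and this is not something a traditional RAID controller does.

```python
import hashlib


def checksum(block: bytes) -> str:
    """Digest recorded alongside the data when it is first written."""
    return hashlib.sha256(block).hexdigest()


original = b"critical database index page"
stored_checksum = checksum(original)

copy_a = original
# Simulated bit rot: one bit flips on the second mirror, with no I/O error.
copy_b = bytes([original[0] ^ 0x01]) + original[1:]

# Redundancy alone: the copies disagree, but neither is marked as bad.
mirror_disagrees = copy_a != copy_b

# Redundancy plus validation: the checksum identifies the intact copy.
good_copies = [name for name, data in (("a", copy_a), ("b", copy_b))
               if checksum(data) == stored_checksum]
print(mirror_disagrees, good_copies)
```

With a known-good copy identified, the damaged one can be repaired from it instead of being trusted or propagated.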

Hardware RAID: The Ultimate Transitional Tech

RAID 5, RAID 6, and RAID 10 pushed traditional disk-level redundancy close to its practical limits. They are impressive pieces of engineering, using parity mathematics and brute-force reconstruction to keep enterprise systems alive during the data explosion of the 2000s and 2010s.

But the complexity required to sustain large hardware arrays today reveals something important: redundancy has outgrown the physical server chassis. RAID solved the scaling crisis for an entire era of IT. In doing so, it proved that the future of redundancy doesn’t live in a hardware controller; it lives in software.

Looking Ahead to Part 3: Redundancy Moves Up the Stack

Parity RAID represents the peak of traditional hardware engineering. As infrastructure becomes distributed and software-defined, it is encountering architectural limits.

Part 3 leaves the hardware controller behind and moves up the stack to examine the systems reshaping modern storage:

- ZFS: A filesystem that integrates redundancy and end-to-end checksumming directly into storage management.

- Ceph: A distributed platform that reconstructs missing data across entire clusters rather than single servers.

- Cloud object storage: Services like Amazon S3 that use erasure coding to protect data across multiple physical locations.

These systems did not eliminate RAID’s ideas. They extended them into software.