RAID 0 & RAID 1: Speed, Simplicity, and Why They Still Exist

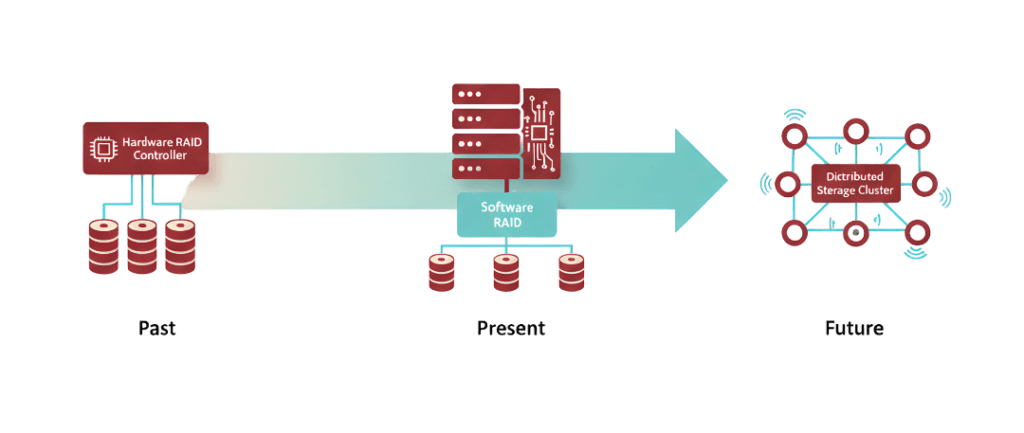

Storage engineering has always been a balancing act between performance, reliability, and cost. RAID was one of the earliest attempts to solve that equation. Decades later, cloud storage replicates data across entire data centers, NVMe drives push performance into territory that used to require multi-disk arrays, and distributed storage platforms are redefining redundancy itself.

So the obvious question shows up:

If modern storage is so advanced, why are engineers still building RAID arrays inside servers and virtual machines?

The answer is simple but uncomfortable.

Infrastructure evolves, but core problems rarely disappear. They just move higher in the stack. RAID still solves very specific problems extremely well, especially when those problems demand predictable performance and deterministic failure behavior.

This article explores the two simplest RAID levels, RAID 0 and RAID 1, not as historical relics but as foundational redundancy models that still influence how modern storage systems are designed. Understanding them is essential because nearly every modern storage technology, from ZFS to distributed object storage, builds on the same philosophical principles.

Why RAID Was Invented in the First Place

Early disk storage was fragile, expensive, and painfully slow. Enterprise systems needed larger capacity, faster throughput, and protection against disk failure, but adding individual disks introduced new risks. More disks meant higher failure probability.

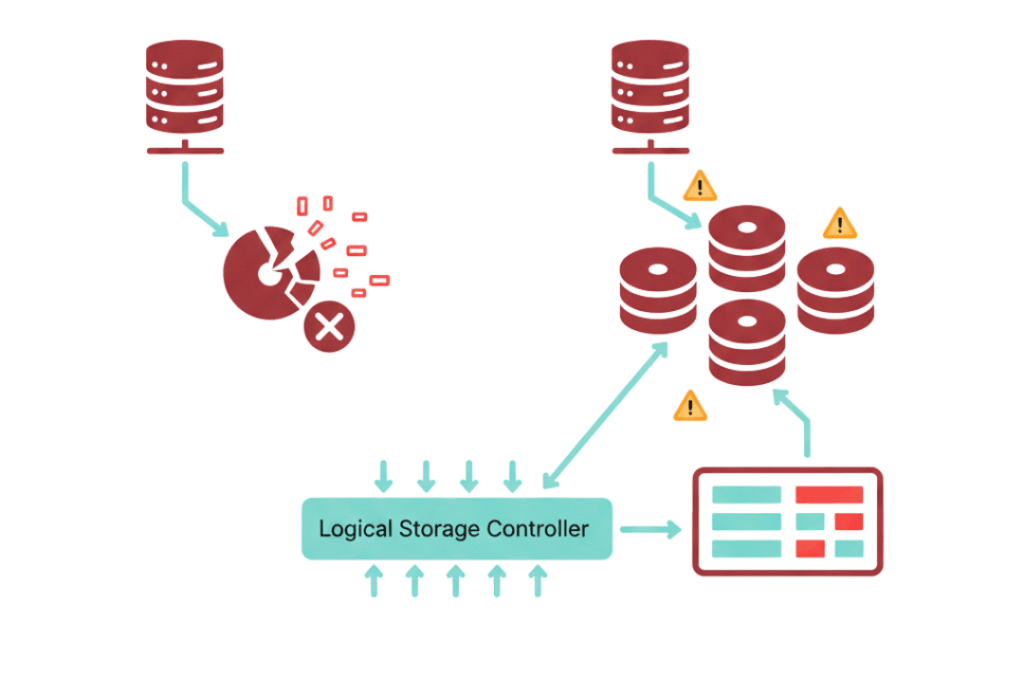

RAID, short for Redundant Array of Independent Disks, was introduced to solve this paradox. Instead of treating disks as isolated storage units, RAID grouped them into logical arrays that behaved like a single device. That grouping allowed engineers to either improve performance, improve reliability, or balance both depending on configuration.

The first RAID implementations focused on two fundamental ideas:

- Splitting data across disks to improve performance

- Duplicating data across disks to improve reliability

Those ideas eventually became RAID 0 and RAID 1, the simplest and arguably most conceptually important RAID levels ever created.

RAID 0 – Performance Through Parallelism

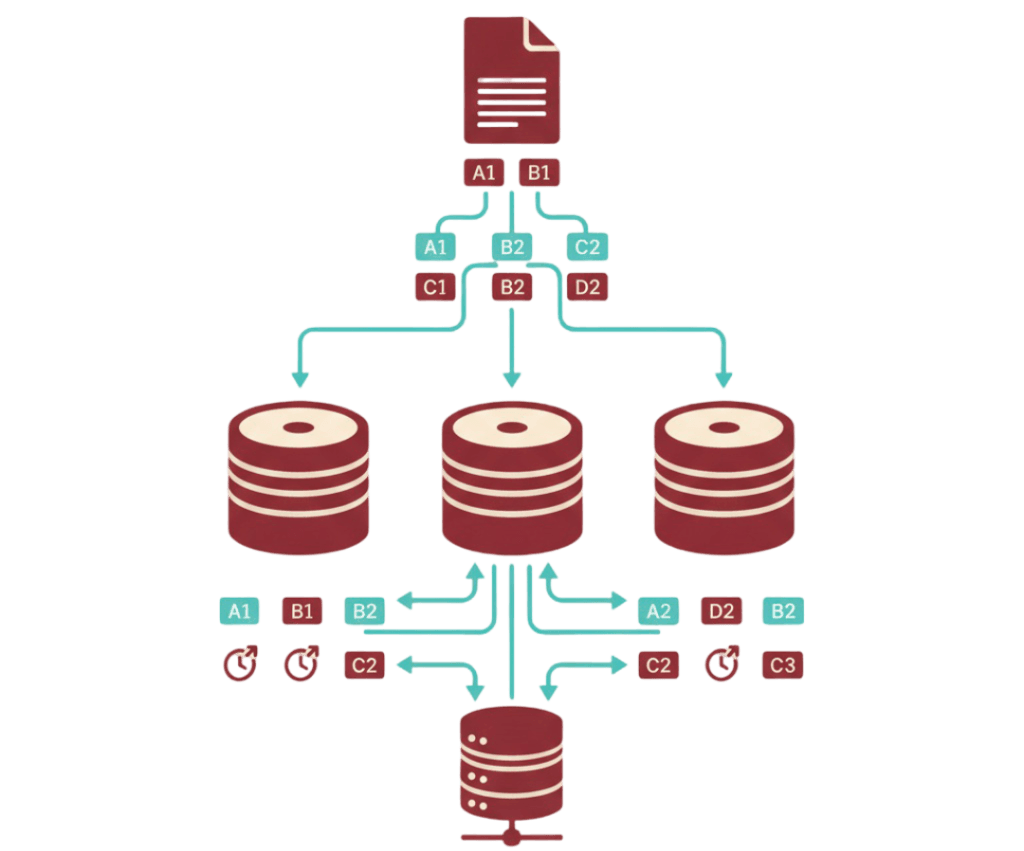

RAID 0 uses a technique called striping. Instead of writing data to one disk at a time, RAID 0 splits data into blocks and distributes those blocks across multiple disks simultaneously.

In practical terms, if two disks exist in a RAID 0 array, every write operation is split into two operations, one for each disk. With four disks, the data is distributed across four independent storage paths.

Why Striping Improves Performance

Disk operations have always been constrained by physical limitations. Traditional spinning drives suffer from seek latency and rotational delays. Even modern SSDs and NVMe drives, despite massive improvements, still benefit from parallel I/O paths.

RAID 0 increases throughput by allowing multiple disks to operate simultaneously. The more disks involved, the greater the potential throughput, assuming the workload supports parallel reads and writes.

For workloads involving large file transfers, temporary processing storage, or high-speed caching layers, RAID 0 remains extremely effective. Video editing pipelines, rendering workloads, and data analytics staging environments frequently use RAID 0 because performance is the only priority.

The Risk Behind the Speed

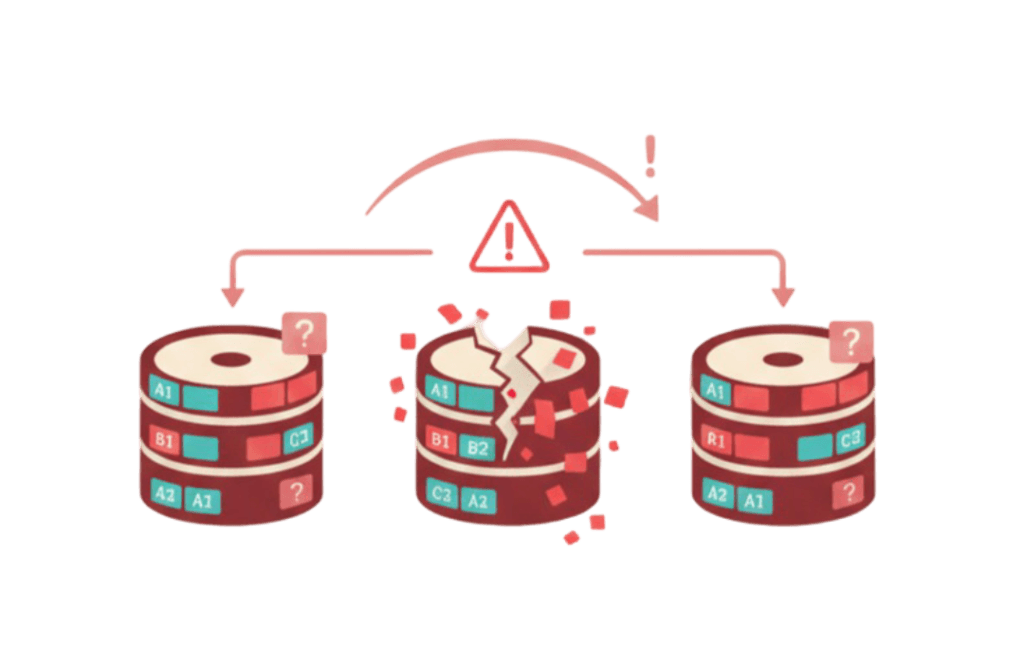

RAID 0 provides no redundancy. Every disk in the array becomes a single point of failure. If one disk fails, the entire array becomes unusable because pieces of every file exist across all disks.

This risk scales with the number of disks. A two-disk RAID 0 array has twice the failure probability of a single disk. A four-disk array increases that probability further. RAID 0 trades reliability entirely for performance, and it does so very deliberately.

RAID 0 in the Modern Storage Landscape

The rise of NVMe drives has forced engineers to reconsider whether RAID 0 is still necessary. Single NVMe drives now deliver performance that once required multi-disk arrays. However, RAID 0 is not disappearing. It is changing purpose.

Modern RAID 0 deployments often focus less on raw speed and more on parallel workload scaling. Large-scale caching layers, temporary build environments, and high-throughput scratch storage still benefit from striping across multiple devices. RAID 0 is also commonly used in environments where data persistence is irrelevant, such as transient container workloads or data processing buffers.

RAID 0 survives because performance aggregation remains a real engineering requirement, even as individual storage devices become faster.

RAID 1 – Redundancy Through Mirroring

While RAID 0 represents performance at any cost, RAID 1 represents reliability through simplicity. RAID 1 duplicates every write operation across multiple disks. Each disk contains an identical copy of the data.

If one disk fails, the system continues operating using the remaining disk without data loss or service interruption.

Why Mirroring Is Operationally Attractive

Mirroring introduces predictable failure behavior. When a disk fails in a RAID 1 array, the system continues operating normally while administrators replace the failed disk and rebuild the mirror.

This simplicity makes RAID 1 extremely attractive for:

- Operating system boot volumes

- Critical database storage

- Configuration storage

- Small business server deployments

Unlike parity-based RAID levels, RAID 1 rebuilds involve copying data from a healthy disk rather than recalculating parity information. This often results in faster and more predictable rebuild operations.

RAID 1 Performance Characteristics

RAID 1 is often misunderstood as purely reliability focused, but it also influences performance in subtle ways.

Write performance typically mirrors single-disk performance because every write must be committed to each mirrored disk. However, read performance can improve because the system can retrieve data from multiple disks simultaneously. Some RAID implementations distribute read requests across mirrored disks, improving read throughput and latency under load.

While RAID 1 sacrifices storage efficiency by halving usable capacity, many production environments accept that cost in exchange for operational stability.

RAID 1 in Modern Infrastructure

Despite massive changes in storage architecture, RAID 1 remains one of the most widely deployed RAID configurations in enterprise infrastructure. Many production servers still use mirrored boot disks because they provide simple, deterministic recovery behavior.

Cloud infrastructure has not eliminated this need. Even though cloud providers replicate data internally, mirrored operating system volumes allow administrators to control recovery processes directly inside virtual machines. This control becomes especially valuable for systems requiring predictable failover behavior or strict uptime requirements.

RAID 1 continues to exist because reliability remains easier to manage when failure models are simple and transparent.

The Modern Relevance Question

With cloud providers offering built-in storage redundancy and NVMe drives delivering massive performance, it is fair to question whether local RAID still matters.

The answer depends heavily on workload design.

RAID 0 remains relevant when performance aggregation across multiple storage devices provides measurable benefits. RAID 1 remains relevant when administrators need direct control over redundancy and recovery behavior at the operating system level.

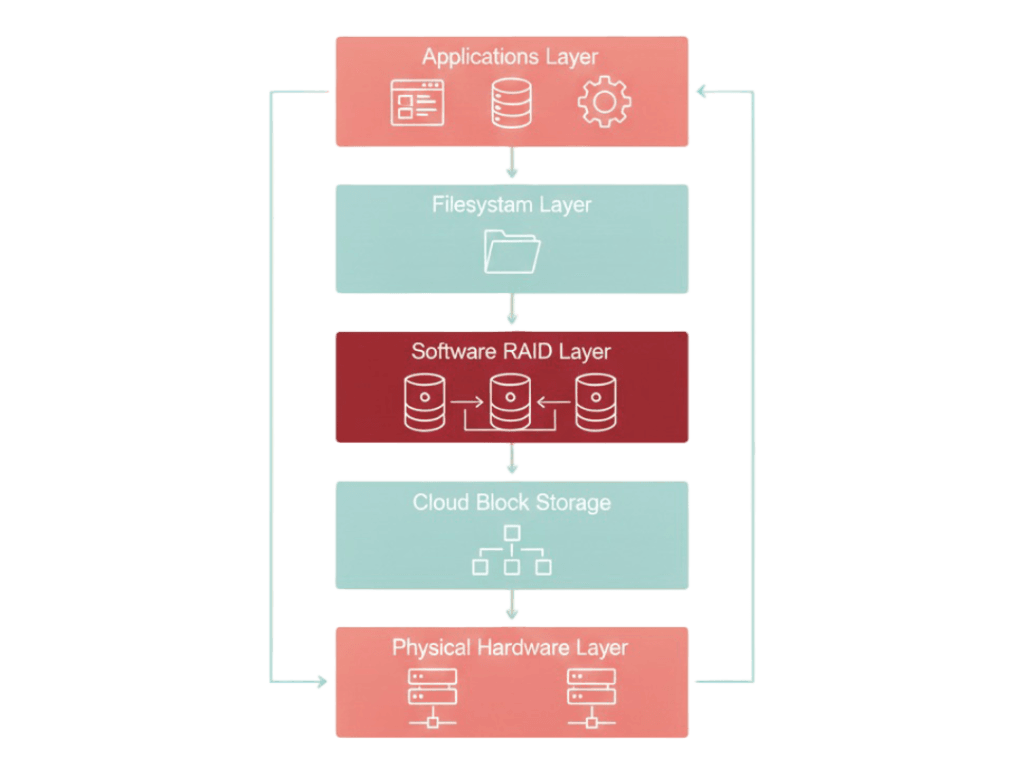

However, both RAID levels also reveal an important trend. Redundancy is slowly moving upward in the infrastructure stack. Instead of protecting individual disks, modern systems increasingly focus on protecting data across nodes, clusters, and geographic regions.

RAID 0 and RAID 1 still exist because they represent the most fundamental expressions of performance scaling and data protection. But they also hint at the limitations that more advanced storage technologies are attempting to solve.

RAID’s Strength Is Its Predictability

One reason RAID 0 and RAID 1 continue to survive in production environments is predictability. Engineers understand how they fail, how they recover, and how they perform under stress. That operational transparency is valuable in environments where unexpected behavior can cascade into service outages.

More advanced storage systems introduce additional layers of abstraction that provide greater resilience and scalability, but they also introduce complexity. RAID remains attractive in scenarios where simplicity reduces operational risk.

Where RAID 0 and RAID 1 Still Make Sense

Despite evolving storage technologies, these RAID levels continue to appear in modern infrastructure for several reasons.

RAID 0 is still used for high-performance temporary storage, analytics processing pipelines, and caching layers where data can be regenerated. RAID 1 remains common in boot disk redundancy, small production databases, and environments requiring deterministic failure recovery.

These use cases demonstrate that RAID is not disappearing. It is becoming more specialized as new storage paradigms emerge.

The Larger Story – Redundancy Is Moving Up the Stack

RAID began as a disk-level redundancy solution. Modern infrastructure increasingly focuses on redundancy at the filesystem, cluster, and application layers. Technologies like ZFS integrate redundancy directly into filesystems, while distributed storage platforms replicate data across multiple nodes.

This shift does not eliminate RAID. It expands redundancy thinking beyond hardware boundaries. RAID 0 and RAID 1 remain foundational concepts that help engineers understand how data distribution and duplication behave under real workloads.

Looking Ahead

RAID 0 and RAID 1 represent the simplest redundancy strategies ever implemented, yet their core ideas still shape modern storage design. Striping shows how performance can scale through parallelism. Mirroring shows how reliability can be achieved through duplication.

That simplicity holds as long as storage remains relatively small. Once disk counts rise and capacities grow into tens or hundreds of terabytes, the cost of mirroring becomes hard to justify, and the failure risk of pure striping becomes unacceptable.

This is where parity enters the picture.

Part 2 of this series examines RAID 5, RAID 6, and RAID 10 designs created to scale redundancy beyond small systems. These configurations solve real capacity problems, but they also introduce rebuild risk, performance penalties, and failure modes that don’t show up at smaller scales.