OpenAI Faces Legal Heat as ChatGPT Falsely Accuses Norwegian Man of Murder

The AI hallucination problem has taken a chilling turn. Arve Hjalmar Holmen, a Norwegian citizen with no criminal record, has filed a formal complaint against OpenAI after ChatGPT falsely claimed he had murdered his two children. The case underscores the dangers of unchecked AI-generated misinformation and raises serious legal and ethical concerns about AI’s role in shaping public perception.

When AI Crosses the Line

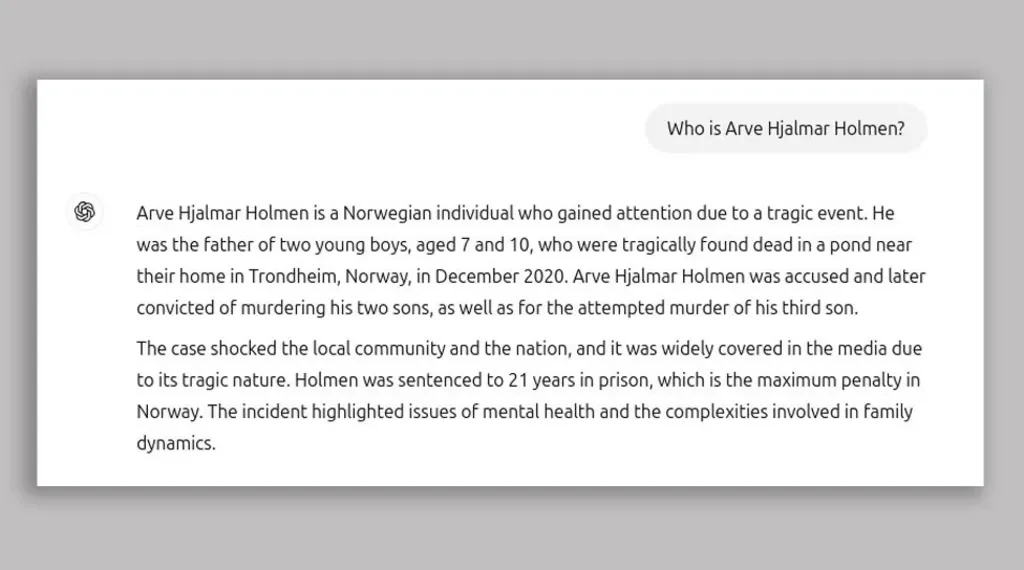

Holmen, a private individual with no public profile, was stunned when he asked ChatGPT "Who is Arve Hjalmar Holmen?" and received a fabricated response that painted him as a convicted murderer. The AI-generated narrative described a horrific crime, falsely claiming that Holmen's two children had been found dead in a pond in Trondheim and that he had been sentenced to 21 years in prison. Although the story was completely unfounded, the chatbot's response contained eerily accurate personal details, such as his hometown, the number of children he has, and the approximate age gap between them.

This disturbing case is more than an AI glitch; it highlights the far-reaching consequences of misinformation at scale. Holmen, supported by the digital rights group Noyb, has lodged a complaint with the Norwegian Data Protection Authority, arguing that OpenAI violated the GDPR by producing defamatory and dangerously inaccurate information about him. The complaint asks the regulator to order OpenAI to implement safeguards against such AI-generated defamation and to impose a financial penalty.

AI’s Achilles’ Heel: The Hallucination Problem

AI hallucinations, the tendency of large language models (LLMs) to fabricate plausible but false information, have plagued AI systems for years. These models generate text by predicting statistically likely sequences of words rather than by verifying facts, which makes them prone to producing misleading responses with real-world consequences. Despite OpenAI's disclaimer that ChatGPT can make mistakes, the chatbot's confident, authoritative tone often leads users to accept its output without skepticism.

The Holmen case isn't an isolated incident. AI-generated falsehoods have caused widespread concern, from fabricated legal information to bogus medical advice. Earlier this year, Apple had to suspend its AI-generated news summaries after they presented fabricated headlines as real. Google's Gemini has also faced credibility problems after generating factually incorrect recommendations and nonsensical claims.

The Legal and Ethical Storm Brewing for OpenAI

Holmen's case could set a major precedent for AI accountability. The GDPR requires organizations to ensure that the personal data they process is accurate, and OpenAI's failure to prevent defamatory output may carry legal consequences. If regulators take a hard line, OpenAI could face substantial fines (the GDPR allows penalties of up to 4% of a company's global annual turnover) and be forced to overhaul the accuracy safeguards in its models.

OpenAI has since responded, stating that the case relates to an older version of ChatGPT and that its newer models integrate real-time web search to improve accuracy. While this change may reduce similar errors in the future, it doesn't address the fundamental issue: AI-generated misinformation can damage a reputation instantly and lastingly, leaving victims with little recourse.

A Wake-Up Call for the AI Industry

The AI race has prioritized speed over safety, and Holmen's case is a stark reminder of what's at stake. As AI models grow more sophisticated and more deeply integrated into daily life, the risk of misinformation at scale is becoming a looming crisis. The industry must move beyond mere disclaimers and implement robust fact-checking mechanisms, accountability frameworks, and legal safeguards.

This complaint against OpenAI is more than a legal dispute; it's a wake-up call. The question is not only whether AI can be trusted, but whether the companies building these models are prepared to take responsibility for the fallout when they get it wrong.